braindecode.models.SignalJEPA_PreLocal

- class braindecode.models.SignalJEPA_PreLocal(n_outputs=None, n_chans=None, chs_info=None, n_times=None, input_window_seconds=None, sfreq=None, *, n_spat_filters=4, feature_encoder__conv_layers_spec=((8, 32, 8), (16, 2, 2), (32, 2, 2), (64, 2, 2), (64, 2, 2)), drop_prob=0.0, feature_encoder__mode='default', feature_encoder__conv_bias=False, activation=<class 'torch.nn.modules.activation.GELU'>, pos_encoder__spat_dim=30, pos_encoder__time_dim=34, pos_encoder__sfreq_features=1.0, pos_encoder__spat_kwargs=None, transformer__d_model=64, transformer__num_encoder_layers=8, transformer__num_decoder_layers=4, transformer__nhead=8, _init_feature_encoder=True)

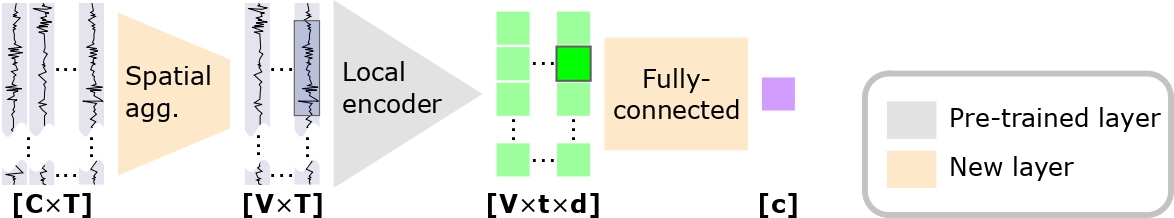

Pre-local downstream architecture introduced in signal-JEPA by Guetschel, P. et al. (2024) [1].

Convolution Channel Foundation Model

This architecture is one of the variants of `SignalJEPA` that can be used for classification purposes.

Added in version 0.9.

Pretrained Weights

Only the feature encoder weights are reused from the shared SSL checkpoints. This model has neither a channel embedding nor a transformer, so `strict=False` is required at load time to skip the unused keys. Either hub variant works; the `_without-chans` one is slightly smaller.

Important

Pre-trained Weights Available

    from braindecode.models import SignalJEPA_PreLocal

    model = SignalJEPA_PreLocal.from_pretrained(
        "braindecode/signal-jepa_without-chans",
        n_chans=22,
        input_window_seconds=16.0,
        n_outputs=4,
        strict=False,
    )
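The note above says that `strict=False` is needed so that checkpoint keys with no counterpart in this model are skipped. The following minimal sketch illustrates that behaviour with plain PyTorch's `load_state_dict`. It is not braindecode's loading code: the two classes `FullCheckpointModel` and `EncoderOnlyModel` are hypothetical stand-ins invented for the illustration.

```python
import torch
from torch import nn


class FullCheckpointModel(nn.Module):
    """Hypothetical stand-in for a checkpoint that also contains a transformer."""

    def __init__(self):
        super().__init__()
        self.feature_encoder = nn.Conv1d(1, 8, kernel_size=32, stride=8, bias=False)
        self.transformer = nn.Linear(8, 8)


class EncoderOnlyModel(nn.Module):
    """Hypothetical stand-in for a model that only keeps the feature encoder."""

    def __init__(self):
        super().__init__()
        self.feature_encoder = nn.Conv1d(1, 8, kernel_size=32, stride=8, bias=False)


checkpoint = FullCheckpointModel().state_dict()
model = EncoderOnlyModel()

# With strict=True this call would raise because of the extra transformer keys.
# With strict=False the matching keys are loaded and the rest are reported.
result = model.load_state_dict(checkpoint, strict=False)
print("unexpected:", result.unexpected_keys)
print("missing:", result.missing_keys)
```

The returned object lists the skipped entries in `unexpected_keys`, which is a convenient way to check that only the intended parts of a checkpoint were ignored.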

To push your own trained model to the Hub:

    model.push_to_hub(
        repo_id="username/my-sjepa-model",
        commit_message="Upload trained SignalJEPA model",
    )

Requires installing `braindecode[hub]` for Hub integration.

Usage

    import torch

    from braindecode.models import SignalJEPA_PreLocal

    model = SignalJEPA_PreLocal(
        n_chans=22,
        input_window_seconds=16.0,
        sfreq=128,
        n_outputs=4,  # e.g., 4-class classification
    )

    # Placeholder input: 8 windows of 16 s at 128 Hz (2048 samples each)
    eeg_data = torch.randn(8, 22, 2048)

    # Forward: (batch, n_chans, n_times) -> (batch, n_outputs)
    output = model(eeg_data)

Warning

Pre-trained at 128 Hz on EEG bandpass-filtered between 0.5 and 40 Hz and rescaled by a factor of \(10^{6}\) (volts to microvolts). Apply the same preprocessing to your data to match the pre-training distribution.
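As a rough illustration of the preprocessing described in the warning, the sketch below band-pass filters a signal between 0.5 and 40 Hz, resamples it to 128 Hz, and rescales it from volts to microvolts using NumPy and SciPy. The helper name `match_pretraining_distribution`, the Butterworth filter and its order, and the polyphase resampler are assumptions made for this example. The exact filter used during pre-training is not stated here.

```python
import numpy as np
from scipy.signal import butter, resample_poly, sosfiltfilt


def match_pretraining_distribution(eeg_volts, sfreq, target_sfreq=128):
    """Band-pass 0.5-40 Hz, resample to target_sfreq, convert volts to microvolts.

    eeg_volts: array of shape (n_chans, n_times), expressed in volts.
    sfreq and target_sfreq are assumed to be integer rates in Hz.
    """
    # 4th-order Butterworth band-pass (filter family and order are assumptions).
    sos = butter(4, [0.5, 40.0], btype="bandpass", fs=sfreq, output="sos")
    filtered = sosfiltfilt(sos, eeg_volts, axis=-1)
    # Polyphase resampling to the pre-training sampling rate.
    resampled = resample_poly(filtered, up=int(target_sfreq), down=int(sfreq), axis=-1)
    # Volts -> microvolts (factor 10^6).
    return resampled * 1e6


# Synthetic example: 22 channels, 16 s recorded at 256 Hz, amplitudes in volts.
rng = np.random.default_rng(0)
raw = rng.normal(scale=20e-6, size=(22, 256 * 16))
x = match_pretraining_distribution(raw, sfreq=256)
print(x.shape)
```

In a real pipeline the same three steps would more likely be applied with the EEG toolbox already used to load the recordings; the point is only that all three must match the pre-training setup.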

- Parameters:

n_outputs (int) – Number of outputs of the model. This is the number of classes in the case of classification.

n_chans (int) – Number of EEG channels.

chs_info (list of dict) – Information about each individual EEG channel. This should be filled with `info["chs"]`. Refer to `mne.Info` for more details.

n_times (int) – Number of time samples of the input window.

input_window_seconds (float) – Length of the input window in seconds.

sfreq (float) – Sampling frequency of the EEG recordings.

n_spat_filters (int) – Number of spatial filters.

feature_encoder__conv_layers_spec (Sequence[tuple[int, int, int]]) – Tuples have shape `(dim, k, stride)` where:

- dim: number of output channels of the layer (unrelated to EEG channels);
- k: temporal length of the layer’s kernel;
- stride: temporal stride of the layer’s kernel.

drop_prob (float)

feature_encoder__mode (str) – Normalisation mode. Either `default` or `layer_norm`.

feature_encoder__conv_bias (bool)

activation (type[Module]) – Activation layer for the feature encoder.

pos_encoder__spat_dim (int) – Number of dimensions to use to encode the spatial position of the patch, i.e. the EEG channel.

pos_encoder__time_dim (int) – Number of dimensions to use to encode the temporal position of the patch.

pos_encoder__sfreq_features (float) – The “downsampled” sampling frequency returned by the feature encoder.

pos_encoder__spat_kwargs (dict | None) – Additional keyword arguments to pass to the `nn.Embedding` layer used to embed the channel names.

transformer__d_model (int) – The number of expected features in the encoder/decoder inputs.

transformer__num_encoder_layers (int) – The number of encoder layers in the transformer.

transformer__num_decoder_layers (int) – The number of decoder layers in the transformer.

transformer__nhead (int) – The number of heads in the multi-head attention models.

_init_feature_encoder (bool) – Do not change the default value (used for internal purposes).
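To make the `(dim, k, stride)` convention of `feature_encoder__conv_layers_spec` more concrete, the sketch below computes the overall temporal stride, the receptive field, and the number of output time steps implied by the default spec. It assumes unpadded, undilated 1D convolutions, which is not confirmed by this page. The helper names are hypothetical and not part of braindecode.

```python
from typing import Sequence, Tuple

ConvSpec = Sequence[Tuple[int, int, int]]  # one (dim, k, stride) tuple per layer

DEFAULT_SPEC: ConvSpec = ((8, 32, 8), (16, 2, 2), (32, 2, 2), (64, 2, 2), (64, 2, 2))


def total_stride(spec: ConvSpec) -> int:
    """Product of the per-layer strides, i.e. the temporal downsampling factor."""
    product = 1
    for _dim, _k, stride in spec:
        product *= stride
    return product


def receptive_field(spec: ConvSpec) -> int:
    """Number of input samples seen by one output step (no padding, no dilation)."""
    rf, jump = 1, 1
    for _dim, k, stride in spec:
        rf += (k - 1) * jump
        jump *= stride
    return rf


def n_output_steps(n_times: int, spec: ConvSpec) -> int:
    """Output length after applying every layer to n_times samples (no padding)."""
    n = n_times
    for _dim, k, stride in spec:
        n = (n - k) // stride + 1
    return n


sfreq = 128
n_times = int(16.0 * sfreq)  # 16 s window at 128 Hz

print(total_stride(DEFAULT_SPEC))              # 128
print(sfreq / total_stride(DEFAULT_SPEC))      # 1.0
print(receptive_field(DEFAULT_SPEC))           # 152
print(n_output_steps(n_times, DEFAULT_SPEC))   # 15
```

Under these assumptions, a 128 Hz input is downsampled by a factor of 128, giving features at 1 Hz, which is consistent with the default `pos_encoder__sfreq_features=1.0`.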

- Raises:

ValueError – If some input signal-related parameters are not specified and cannot be inferred.

Notes

If some input signal-related parameters are not specified, there will be an attempt to infer them from the other parameters.
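The inference mentioned in the note can be pictured with the relation `n_times = input_window_seconds * sfreq`: any one of the three values follows from the other two. The sketch below is only an illustration of that idea. The function `infer_window` is hypothetical, and the rounding rule is an assumption rather than the library's documented behaviour.

```python
from typing import Optional, Tuple


def infer_window(
    n_times: Optional[int] = None,
    input_window_seconds: Optional[float] = None,
    sfreq: Optional[float] = None,
) -> Tuple[int, float, float]:
    """Fill in whichever of the three window parameters is missing."""
    n_known = sum(v is not None for v in (n_times, input_window_seconds, sfreq))
    if n_known < 2:
        # Mirrors the documented ValueError when inference is impossible.
        raise ValueError("At least two of n_times, input_window_seconds, sfreq are needed.")
    if n_times is None:
        n_times = round(input_window_seconds * sfreq)  # rounding is an assumption
    elif input_window_seconds is None:
        input_window_seconds = n_times / sfreq
    elif sfreq is None:
        sfreq = n_times / input_window_seconds
    return n_times, input_window_seconds, sfreq


print(infer_window(input_window_seconds=16.0, sfreq=128))  # (2048, 16.0, 128)
print(infer_window(n_times=1000, sfreq=250))               # (1000, 4.0, 250)
```

This sketch does not check that three supplied values are mutually consistent.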

References

[1] Guetschel, P., Moreau, T., & Tangermann, M. (2024). S-JEPA: towards seamless cross-dataset transfer through dynamic spatial attention. In 9th Graz Brain-Computer Interface Conference. https://www.doi.org/10.3217/978-3-99161-014-4-003

Hugging Face Hub integration

When the optional `huggingface_hub` package is installed, all models automatically gain the ability to be pushed to and loaded from the Hugging Face Hub. Install with:

    pip install braindecode[hub]

Pushing a model to the Hub:

    from braindecode.models import SignalJEPA_PreLocal

    # Train your model
    model = SignalJEPA_PreLocal(n_chans=22, n_outputs=4, n_times=1000)
    # ... training code ...

    # Push to the Hub
    model.push_to_hub(
        repo_id="username/my-signaljepa_prelocal-model",
        commit_message="Initial model upload",
    )

Loading a model from the Hub:

    from braindecode.models import SignalJEPA_PreLocal

    # Load pretrained model
    model = SignalJEPA_PreLocal.from_pretrained("username/my-signaljepa_prelocal-model")

    # Load with a different number of outputs (head is rebuilt automatically)
    model = SignalJEPA_PreLocal.from_pretrained(
        "username/my-signaljepa_prelocal-model", n_outputs=4
    )

Extracting features and replacing the head:

    import torch

    x = torch.randn(1, model.n_chans, model.n_times)

    # Extract encoder features (consistent dict across all models)
    out = model(x, return_features=True)
    features = out["features"]

    # Replace the classification head
    model.reset_head(n_outputs=10)

Saving and restoring full configuration:

    import json

    config = model.get_config()  # all __init__ params
    with open("config.json", "w") as f:
        json.dump(config, f)

    model2 = SignalJEPA_PreLocal.from_config(config)  # reconstruct (no weights)

All model parameters (both EEG-specific and model-specific such as dropout rates, activation functions, number of filters) are automatically saved to the Hub and restored when loading.

See Loading and Adapting Pretrained Foundation Models for a complete tutorial.

Methods

- forward(X, return_features=False)

Define the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for forward pass needs to be defined within this function, one should call the `Module` instance afterwards instead of this since the former takes care of running the registered hooks while the latter silently ignores them.

- Parameters:

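X – Input EEG tensor of shape (batch, n_chans, n_times).

return_features – If True, return a dict whose "features" entry holds the encoder features, as in the feature-extraction example above.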

- classmethod from_pretrained(model=None, n_outputs=None, n_spat_filters=4, **kwargs)

Instantiate a new model from a pre-trained `SignalJEPA` model or from Hub.

- Parameters:

model (SignalJEPA | str | Path) – Either a pre-trained `SignalJEPA` model, a string/Path to a local directory (for Hub-style loading), or None (for Hub loading via kwargs).

n_outputs (int) – Number of classes for the new model. Required when loading from a SignalJEPA model, optional when loading from Hub (will be read from config).

n_spat_filters (int) – Number of spatial filters.

**kwargs – Additional keyword arguments passed to the parent class for Hub loading.

- reset_head(n_outputs)

Replace the classification head for a new number of outputs.

This is called automatically by `from_pretrained()` when the user passes an `n_outputs` that differs from the saved config. Override in subclasses that need a model-specific head structure.

- Parameters:

n_outputs (int) – New number of output classes.

Examples

    >>> from braindecode.models import BENDR
    >>> model = BENDR(n_chans=22, n_times=1000, n_outputs=4)
    >>> model.reset_head(10)
    >>> model.n_outputs
    10

Added in version 1.4.