braindecode.models.EEGSimpleConv

- class braindecode.models.EEGSimpleConv(n_outputs=None, n_chans=None, sfreq=None, feature_maps=128, n_convs=2, resampling_freq=80, kernel_size=8, return_feature=False, activation=torch.nn.ReLU, chs_info=None, n_times=None, input_window_seconds=None)

EEGSimpleConv from Ouahidi, YE et al (2023) [Yassine2023].

Convolution

EEGSimpleConv is a 1D convolutional neural network originally designed for decoding motor imagery from EEG signals. The model aims for a very simple and straightforward architecture that allows low latency while still achieving very competitive performance.

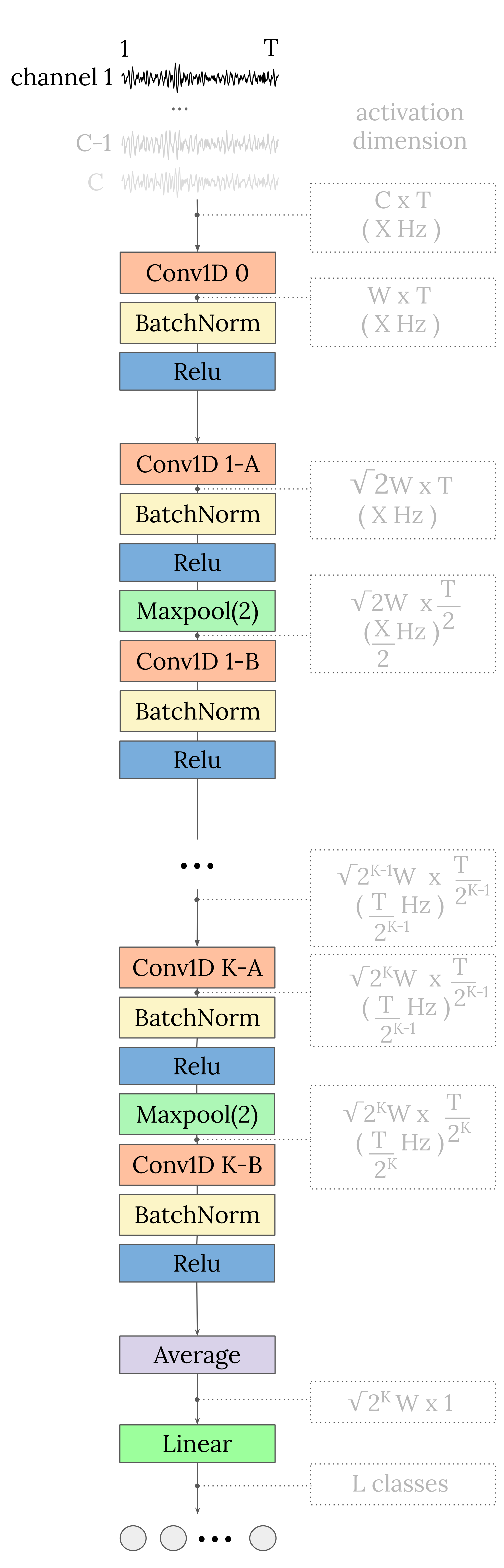

EEG-SimpleConv starts with a 1D convolutional layer, where each EEG channel enters a separate 1D convolutional channel. This is followed by a series of blocks of two 1D convolutional layers. Between the two convolutional layers of each block is a max pooling layer, which downsamples the data by a factor of 2. Each convolution is followed by a batch normalisation layer and a ReLU activation function. Finally, a global average pooling (in the time domain) is performed to obtain a single value per feature map, which is then fed into a linear layer to obtain the final classification prediction output.
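The effect of the pooling stages on the time axis can be traced with a little arithmetic. The following is a minimal sketch, not the library's implementation. It assumes that the input is first resampled from `sfreq` to `resampling_freq` (suggested by the parameter name, but not described in this section), that every convolution preserves the sequence length, and that each max pooling layer floor-divides the length by 2. The function name `time_lengths` is hypothetical.

```python
def time_lengths(n_times, sfreq, resampling_freq, n_convs):
    """Return the time-axis length after resampling and after each block."""
    # Assumption: resampling scales the sample count by resampling_freq / sfreq.
    length = int(n_times * resampling_freq / sfreq)
    lengths = [length]
    for _ in range(n_convs):
        # Assumption: the convolutions keep the length; the max pooling
        # between the two convolutions of a block halves it.
        length = length // 2
        lengths.append(length)
    return lengths


# A 4 s window at 250 Hz, resampled to 80 Hz, passed through 2 blocks.
lengths = time_lengths(n_times=1000, sfreq=250, resampling_freq=80, n_convs=2)
print(lengths)  # [320, 160, 80]
```

Whatever the final length is, the global average pooling collapses it to a single value per feature map, so the linear layer's input size does not depend on `n_times`.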

The paper and the original code, with more details about the methodological choices, are available in [Yassine2023] and [Yassine2023Code].

The input should be a three-dimensional tensor of EEG signals with shape (batch_size, n_channels, n_timesteps).

- Parameters:

n_outputs (int) – Number of outputs of the model. This is the number of classes in the case of classification.

n_chans (int) – Number of EEG channels.

sfreq (float) – Sampling frequency of the EEG recordings.

feature_maps (int) – Number of feature maps at the first convolution; this sets the width of the model.

n_convs (int) – Number of convolution blocks (2 convolutions per block); this sets the depth of the model.

resampling_freq – The description is missing.

kernel_size (int) – Size of the convolution kernels.

return_feature – The description is missing.

activation (type[Module]) – Activation function class to apply. Should be a PyTorch activation module class like nn.ReLU or nn.ELU. Default is nn.ReLU.

chs_info (list of dict) – Information about each individual EEG channel. This should be filled with info["chs"]. Refer to mne.Info for more details.

n_times (int) – Number of time samples of the input window.

input_window_seconds (float) – Length of the input window in seconds.

- Raises:

ValueError – If some input signal-related parameters are not specified and cannot be inferred.
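The signal-related parameters are linked by n_times = input_window_seconds × sfreq, so any one of them can be derived from the other two. The sketch below illustrates that kind of inference and the error raised when it is impossible. `resolve_signal_params` is a hypothetical helper written for this example, not a braindecode function, and the rounding rule is an assumption.

```python
def resolve_signal_params(n_times=None, sfreq=None, input_window_seconds=None):
    """Fill in the one missing value among the three signal parameters.

    Hypothetical helper for illustration; not part of braindecode.
    """
    given = [v is not None for v in (n_times, sfreq, input_window_seconds)]
    if sum(given) < 2:
        raise ValueError(
            "At least two of n_times, sfreq and input_window_seconds are required."
        )
    if n_times is None:
        # Assumption: round to the nearest whole number of samples.
        n_times = int(round(input_window_seconds * sfreq))
    elif sfreq is None:
        sfreq = n_times / input_window_seconds
    elif input_window_seconds is None:
        input_window_seconds = n_times / sfreq
    return n_times, sfreq, input_window_seconds


print(resolve_signal_params(sfreq=250, input_window_seconds=4.0))  # (1000, 250, 4.0)
```

Calling `resolve_signal_params(n_times=1000)` alone raises `ValueError`, because neither the sampling frequency nor the window length can be recovered from the sample count.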

Notes

The authors recommend using the default parameters for MI decoding. Please refer to the original paper and code for more details.

Recommended hyperparameter ranges for each evaluation paradigm:

| Parameter | Within-Subject | Cross-Subject |
|---|---|---|
| feature_maps | [64-144] | [64-144] |
| n_convs | 1 | [2-4] |
| resampling_freq | [70-100] | [50-80] |
| kernel_size | [12-17] | [5-8] |

An intensive ablation study is included in the paper to understand the effect of each parameter on the model performance.
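As a convenience, the recommended ranges can be encoded as data and used to check a configuration before training. This is a minimal sketch: the `RECOMMENDED` dictionary and the `out_of_range` helper are hypothetical names, and treating the bounds as inclusive is an assumption.

```python
# Recommended ranges copied from the table above, as (low, high) pairs.
# Assumption: both bounds are inclusive.
RECOMMENDED = {
    "within_subject": {
        "feature_maps": (64, 144),
        "n_convs": (1, 1),
        "resampling_freq": (70, 100),
        "kernel_size": (12, 17),
    },
    "cross_subject": {
        "feature_maps": (64, 144),
        "n_convs": (2, 4),
        "resampling_freq": (50, 80),
        "kernel_size": (5, 8),
    },
}


def out_of_range(paradigm, **params):
    """Return the names of parameters outside the recommended range."""
    ranges = RECOMMENDED[paradigm]
    return [
        name
        for name, value in params.items()
        if name in ranges and not ranges[name][0] <= value <= ranges[name][1]
    ]


# The defaults from the class signature.
defaults = dict(feature_maps=128, n_convs=2, resampling_freq=80, kernel_size=8)
print(out_of_range("cross_subject", **defaults))   # []
print(out_of_range("within_subject", **defaults))  # ['n_convs', 'kernel_size']
```

Under these assumptions, the signature defaults fall inside every cross-subject range, while `n_convs` and `kernel_size` fall outside the within-subject ranges.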

Added in version 0.9.

References

[Yassine2023] Yassine El Ouahidi, V. Gripon, B. Pasdeloup, G. Bouallegue, N. Farrugia, G. Lioi, 2023. A Strong and Simple Deep Learning Baseline for BCI Motor Imagery Decoding. arXiv preprint. arxiv.org/abs/2309.07159

[Yassine2023Code] Yassine El Ouahidi, V. Gripon, B. Pasdeloup, G. Bouallegue, N. Farrugia, G. Lioi, 2023. A Strong and Simple Deep Learning Baseline for BCI Motor Imagery Decoding. GitHub repository. elouayas/EEGSimpleConv.

Hugging Face Hub integration

When the optional huggingface_hub package is installed, all models automatically gain the ability to be pushed to and loaded from the Hugging Face Hub. Install with: `pip install braindecode[hub]`

Pushing a model to the Hub:

```python
from braindecode.models import EEGSimpleConv

# Train your model
model = EEGSimpleConv(n_chans=22, n_outputs=4, n_times=1000)
# ... training code ...

# Push to the Hub
model.push_to_hub(
    repo_id="username/my-eegsimpleconv-model",
    commit_message="Initial model upload",
)
```

Loading a model from the Hub:

```python
from braindecode.models import EEGSimpleConv

# Load pretrained model
model = EEGSimpleConv.from_pretrained("username/my-eegsimpleconv-model")

# Load with a different number of outputs (head is rebuilt automatically)
model = EEGSimpleConv.from_pretrained("username/my-eegsimpleconv-model", n_outputs=4)
```

Extracting features and replacing the head:

```python
import torch

x = torch.randn(1, model.n_chans, model.n_times)

# Extract encoder features (consistent dict across all models)
out = model(x, return_features=True)
features = out["features"]

# Replace the classification head
model.reset_head(n_outputs=10)
```

Saving and restoring full configuration:

```python
import json

config = model.get_config()  # all __init__ params
with open("config.json", "w") as f:
    json.dump(config, f)

model2 = EEGSimpleConv.from_config(config)  # reconstruct (no weights)
```

All model parameters, both EEG-specific ones and model-specific ones such as dropout rates, activation functions and number of filters, are automatically saved to the Hub and restored when loading.

See Loading and Adapting Pretrained Foundation Models for a complete tutorial.

Methods