braindecode.models.SleepStagerChambon2018

- class braindecode.models.SleepStagerChambon2018(n_chans=None, sfreq=None, n_conv_chs=8, time_conv_size_s=0.5, max_pool_size_s=0.125, pad_size_s=0.25, activation=<class 'torch.nn.modules.activation.ReLU'>, input_window_seconds=None, n_outputs=5, drop_prob=0.25, apply_batch_norm=False, return_feats=False, chs_info=None, n_times=None)

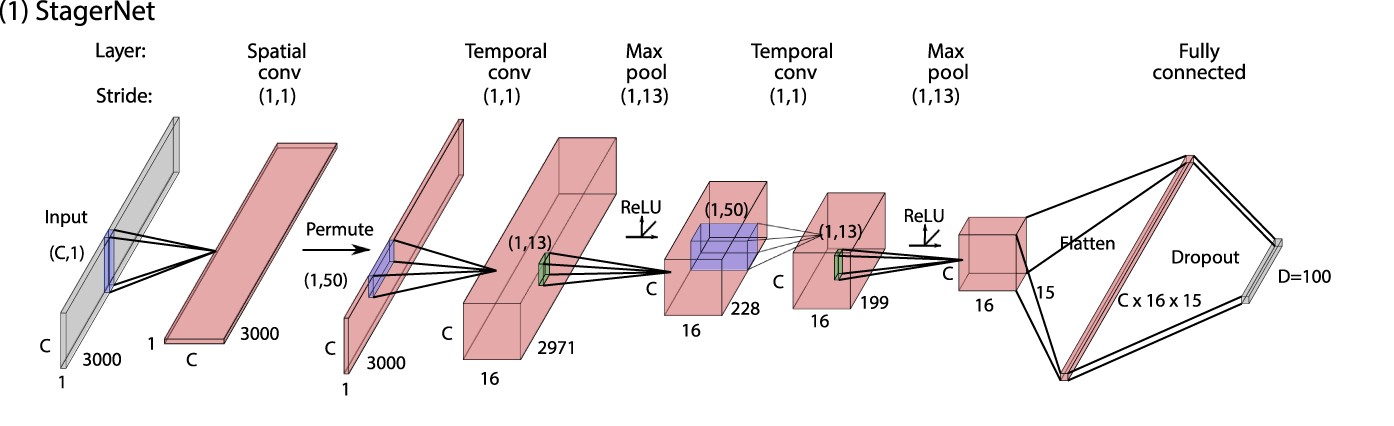

Sleep staging architecture from Chambon et al. (2018) [Chambon2018].

Model category: Convolution

Convolutional neural network for sleep staging described in [Chambon2018].

- Parameters:

n_chans (int) – Number of EEG channels.

sfreq (float) – Sampling frequency of the EEG recordings.

n_conv_chs (int) – Number of convolutional channels. Set to 8 in [Chambon2018].

time_conv_size_s (float) – Size of filters in temporal convolution layers, in seconds. Set to 0.5 in [Chambon2018] (64 samples at sfreq=128).

max_pool_size_s (float) – Max pooling size, in seconds. Set to 0.125 in [Chambon2018] (16 samples at sfreq=128).

pad_size_s (float) – Padding size, in seconds. Set to 0.25 in [Chambon2018] (half the temporal convolution kernel size).

activation (type[Module]) – Activation function class to apply. Should be a PyTorch activation module class like nn.ReLU or nn.ELU. Default is nn.ReLU.

input_window_seconds (float) – Length of the input window in seconds.

n_outputs (int) – Number of outputs of the model. This is the number of classes in the case of classification.

drop_prob (float) – Dropout rate before the output dense layer.

apply_batch_norm (bool) – If True, apply batch normalization after both temporal convolutional layers.

return_feats (bool) – If True, return the features, i.e. the output of the feature extractor (before the final linear layer). If False, pass the features through the final linear layer.

chs_info (list of dict) – Information about each individual EEG channel. This should be filled with info["chs"]. Refer to mne.Info for more details.

n_times (int) – Number of time samples of the input window.

- Raises:

ValueError – If some input signal-related parameters are not specified and cannot be inferred.

Notes

If some input signal-related parameters are not specified, the model attempts to infer them from the other parameters.
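The inference relies on simple relations between the signal-related parameters. The number of channels equals the length of chs_info, and n_times = input_window_seconds * sfreq, so any two of those three values determine the third. The following plain-Python sketch illustrates these relations. infer_signal_params is a hypothetical helper, not part of braindecode. It raises as soon as any value stays unknown, whereas the model itself may only complain about the values its layers actually need. The channel dictionaries are stand-ins that hold only a "ch_name" key, unlike real mne info["chs"] entries.

```python
from typing import Optional, Sequence


def infer_signal_params(
    n_chans: Optional[int] = None,
    chs_info: Optional[Sequence[dict]] = None,
    n_times: Optional[int] = None,
    sfreq: Optional[float] = None,
    input_window_seconds: Optional[float] = None,
) -> dict:
    """Fill in missing signal-related parameters (hypothetical helper)."""
    if n_chans is None and chs_info is not None:
        n_chans = len(chs_info)  # one entry per EEG channel

    # Any two of (n_times, sfreq, input_window_seconds) determine the third.
    if n_times is None and sfreq is not None and input_window_seconds is not None:
        n_times = round(input_window_seconds * sfreq)
    elif sfreq is None and n_times is not None and input_window_seconds is not None:
        sfreq = n_times / input_window_seconds
    elif input_window_seconds is None and n_times is not None and sfreq is not None:
        input_window_seconds = n_times / sfreq

    params = {
        "n_chans": n_chans,
        "n_times": n_times,
        "sfreq": sfreq,
        "input_window_seconds": input_window_seconds,
    }
    missing = [name for name, value in params.items() if value is None]
    if missing:
        # Mirrors the documented behaviour: missing and not inferable -> ValueError
        raise ValueError(f"Cannot infer {', '.join(missing)} from the given parameters")
    return params


# Stand-in for info["chs"]: real entries carry many more keys than "ch_name".
chs = [{"ch_name": "Fpz-Cz"}, {"ch_name": "Pz-Oz"}]
print(infer_signal_params(chs_info=chs, sfreq=100.0, input_window_seconds=30.0))
```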

References

[Chambon2018] Chambon, S., Galtier, M. N., Arnal, P. J., Wainrib, G., & Gramfort, A. (2018). A deep learning architecture for temporal sleep stage classification using multivariate and multimodal time series. IEEE Transactions on Neural Systems and Rehabilitation Engineering, 26(4), 758-769.

Hugging Face Hub integration

When the optional huggingface_hub package is installed, all models automatically gain the ability to be pushed to and loaded from the Hugging Face Hub. Install it with:

```bash
pip install braindecode[hub]
```

Pushing a model to the Hub:

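```python
from braindecode.models import SleepStagerChambon2018

# Train your model. Filter, pooling and padding sizes are given in seconds,
# so sfreq must be known (here 1000 samples at 100 Hz, i.e. a 10 s window).
model = SleepStagerChambon2018(n_chans=22, sfreq=100, n_outputs=4, n_times=1000)
# ... training code ...

# Push to the Hub
model.push_to_hub(
    repo_id="username/my-sleepstagerchambon2018-model",
    commit_message="Initial model upload",
)
```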

Loading a model from the Hub:

```python
from braindecode.models import SleepStagerChambon2018

# Load pretrained model
model = SleepStagerChambon2018.from_pretrained("username/my-sleepstagerchambon2018-model")

# Load with a different number of outputs (head is rebuilt automatically)
model = SleepStagerChambon2018.from_pretrained(
    "username/my-sleepstagerchambon2018-model", n_outputs=5
)
```

Extracting features and replacing the head:

```python
import torch

x = torch.randn(1, model.n_chans, model.n_times)

# Extract encoder features (consistent dict across all models)
out = model(x, return_features=True)
features = out["features"]

# Replace the classification head
model.reset_head(n_outputs=10)
```

Saving and restoring full configuration:

```python
import json

config = model.get_config()  # all __init__ params
with open("config.json", "w") as f:
    json.dump(config, f)

model2 = SleepStagerChambon2018.from_config(config)  # reconstruct (no weights)
```

All model parameters (both EEG-specific and model-specific such as dropout rates, activation functions, number of filters) are automatically saved to the Hub and restored when loading.

See the "Loading and Adapting Pretrained Foundation Models" tutorial for a complete walkthrough.


Examples using braindecode.models.SleepStagerChambon2018

Self-supervised learning on EEG with relative positioning

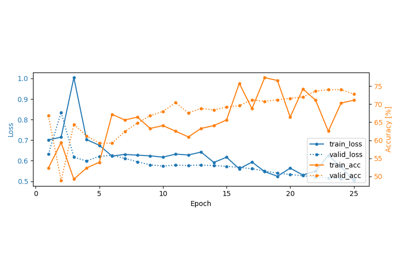

Sleep staging on the Sleep Physionet dataset using Chambon2018 network