braindecode.models.EEGITNet

- class braindecode.models.EEGITNet(n_outputs=None, n_chans=None, n_times=None, chs_info=None, input_window_seconds=None, sfreq=None, n_filters_time=2, kernel_length=16, pool_kernel=4, tcn_in_channel=14, tcn_kernel_size=4, tcn_padding=3, drop_prob=0.4, tcn_dilatation=1, activation=<class 'torch.nn.modules.activation.ELU'>)

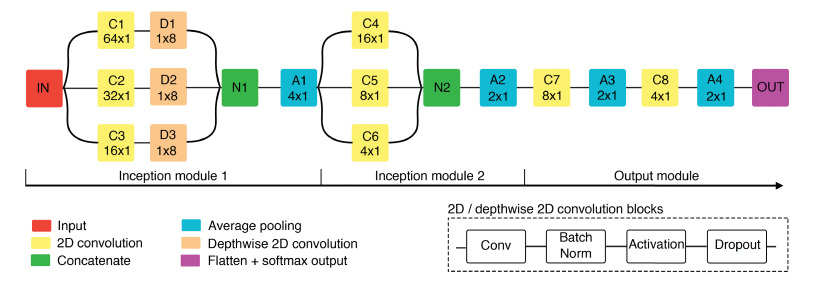

EEG-ITNet from Salami et al. (2022) [Salami2022].

Categories: Convolution, Recurrent

EEG-ITNet: An Explainable Inception Temporal Convolutional Network for motor imagery classification, from Salami et al. (2022).

See [Salami2022] for details.

Code adapted from abbassalami/eeg-itnet

- Parameters:

n_outputs (int) – Number of outputs of the model. This is the number of classes in the case of classification.

n_chans (int) – Number of EEG channels.

n_times (int) – Number of time samples of the input window.

chs_info (list of dict) – Information about each individual EEG channel. This should be filled with info["chs"]. Refer to mne.Info for more details.

input_window_seconds (float) – Length of the input window in seconds.

sfreq (float) – Sampling frequency of the EEG recordings.

n_filters_time (int) – No description is provided. Default is 2.

kernel_length (int) – Kernel length for the inception branches. Determines the temporal receptive field. Default is 16.

pool_kernel (int) – Pooling kernel size for the average pooling layer. Default is 4.

tcn_in_channel (int) – Number of input channels for the Temporal Convolutional (TC) blocks. Default is 14.

tcn_kernel_size (int) – Kernel size for the TC blocks. Determines the temporal receptive field. Default is 4.

tcn_padding (int) – Padding size for the TC blocks, chosen to maintain the input dimensions. Default is 3.

drop_prob (float) – Dropout probability applied after certain layers to prevent overfitting. Default is 0.4.

tcn_dilatation (int) – Dilation rate for the first TC block. Subsequent blocks have dilation rates multiplied by powers of 2. Default is 1.

activation (type[Module]) – Activation function class to apply. Should be a PyTorch activation module class such as nn.ReLU or nn.ELU. Default is nn.ELU.
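The TC-block parameters interact through standard 1-D convolution arithmetic. The pure-Python sketch below illustrates that arithmetic; it is not braindecode's code. It uses the `torch.nn.Conv1d` output-length formula and rests on several assumptions: each TC block pads both sides by `tcn_padding * dilation` and then trims the extra trailing samples (the usual causal "chomp" in TCNs), there is a hypothetical `n_blocks=4` stack whose dilations follow `tcn_dilatation * 2**i`, and a single average pooling with kernel and stride equal to `pool_kernel` is applied before the TC blocks.

```python
def conv1d_out_len(n_in: int, kernel_size: int, padding: int = 0,
                   dilation: int = 1, stride: int = 1) -> int:
    """Output length of torch.nn.Conv1d, using the formula in the PyTorch docs."""
    return (n_in + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


def causal_block_len(n_in: int, kernel_size: int, tcn_padding: int,
                     dilation: int) -> int:
    """Length after one padded, dilated convolution followed by a trailing trim.

    Assumption: padding is scaled by the dilation and the `pad` extra samples
    at the end are dropped (a "chomp"), as in common TCN implementations.
    """
    pad = tcn_padding * dilation
    n_out = conv1d_out_len(n_in, kernel_size, padding=pad, dilation=dilation)
    return n_out - pad


def dilation_schedule(tcn_dilatation: int, n_blocks: int) -> list[int]:
    """Block i uses tcn_dilatation * 2**i; n_blocks is a hypothetical count."""
    return [tcn_dilatation * 2 ** i for i in range(n_blocks)]


def receptive_field(kernel_size: int, dilations: list[int]) -> int:
    """Input samples seen by one output of a stack of dilated convolutions
    (counting one convolution per block, a simplification)."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


if __name__ == "__main__":
    # Hypothetical input: n_times=1000, reduced by one average pooling with
    # kernel = stride = pool_kernel = 4 before the TC blocks (an assumption).
    length = 1000 // 4
    dilations = dilation_schedule(tcn_dilatation=1, n_blocks=4)
    for d in dilations:
        length = causal_block_len(length, kernel_size=4, tcn_padding=3, dilation=d)
    print(dilations, length, receptive_field(4, dilations))
    # → [1, 2, 4, 8] 250 46
```

With the defaults, tcn_padding equals tcn_kernel_size - 1 = 3. Under the assumptions above, that is exactly the padding that keeps the sequence length unchanged at every dilation. A larger padding would lengthen the sequence and a smaller one would shorten it.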

- Raises:

ValueError – If some input signal-related parameters are not specified and cannot be inferred.
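This error is raised when the model cannot determine its input shape. The hypothetical helper below illustrates the kind of inference involved. It assumes that `n_chans` can be taken from `len(chs_info)` and that `n_times` can be derived from `input_window_seconds * sfreq`. It is an illustrative sketch, not braindecode's actual implementation.

```python
from typing import Optional, Sequence


def infer_signal_params(
    n_chans: Optional[int] = None,
    n_times: Optional[int] = None,
    chs_info: Optional[Sequence[dict]] = None,
    input_window_seconds: Optional[float] = None,
    sfreq: Optional[float] = None,
) -> tuple[int, int]:
    """Hypothetical helper: resolve (n_chans, n_times) or raise ValueError."""
    # Channel count: an explicit value wins, otherwise count chs_info entries.
    if n_chans is None and chs_info is not None:
        n_chans = len(chs_info)
    # Window length in samples: an explicit value wins, otherwise seconds * Hz.
    if n_times is None and input_window_seconds is not None and sfreq is not None:
        n_times = int(round(input_window_seconds * sfreq))
    if n_chans is None or n_times is None:
        raise ValueError(
            "n_chans and n_times must be given or inferable from "
            "chs_info, input_window_seconds and sfreq."
        )
    return n_chans, n_times


if __name__ == "__main__":
    chs = [{"ch_name": f"EEG{i}"} for i in range(22)]
    print(infer_signal_params(chs_info=chs, input_window_seconds=4.0, sfreq=250.0))
    # → (22, 1000)
```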

Notes

This implementation is not guaranteed to be correct and has not been checked by the original authors; it was reimplemented from the paper, based on the authors' implementation.

References

Hugging Face Hub integration

When the optional huggingface_hub package is installed, all models automatically gain the ability to be pushed to and loaded from the Hugging Face Hub. Install with:

```shell
pip install braindecode[hub]
```

Pushing a model to the Hub:

```python
from braindecode.models import EEGITNet

# Train your model
model = EEGITNet(n_chans=22, n_outputs=4, n_times=1000)
# ... training code ...

# Push to the Hub
model.push_to_hub(
    repo_id="username/my-eegitnet-model",
    commit_message="Initial model upload",
)
```

Loading a model from the Hub:

```python
from braindecode.models import EEGITNet

# Load pretrained model
model = EEGITNet.from_pretrained("username/my-eegitnet-model")

# Load with a different number of outputs (head is rebuilt automatically)
model = EEGITNet.from_pretrained("username/my-eegitnet-model", n_outputs=2)
```

Extracting features and replacing the head:

```python
import torch

x = torch.randn(1, model.n_chans, model.n_times)

# Extract encoder features (consistent dict across all models)
out = model(x, return_features=True)
features = out["features"]

# Replace the classification head
model.reset_head(n_outputs=10)
```

Saving and restoring full configuration:

```python
import json

config = model.get_config()  # all __init__ params
with open("config.json", "w") as f:
    json.dump(config, f)

model2 = EEGITNet.from_config(config)  # reconstruct (no weights)
```

All model parameters (both EEG-specific and model-specific such as dropout rates, activation functions, number of filters) are automatically saved to the Hub and restored when loading.
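Some of these parameters, such as activation, are classes rather than plain values, and a raw dict holding a class cannot be passed to json.dump as-is. The sketch below shows one way to make such a config JSON-safe: it stores each class as its dotted import path (for nn.ELU, the path shown in the signature above, torch.nn.modules.activation.ELU) and re-imports it with importlib. The helper names are hypothetical, and braindecode's own get_config/from_config may handle this differently.

```python
import importlib
import json


def class_to_path(cls: type) -> str:
    """Encode a class as 'module.ClassName'."""
    return f"{cls.__module__}.{cls.__qualname__}"


def path_to_class(path: str) -> type:
    """Re-import a class from its dotted path (top-level classes only)."""
    module_name, _, attr = path.rpartition(".")
    return getattr(importlib.import_module(module_name), attr)


def to_json_config(config: dict) -> str:
    """Replace class values with {"__class__": path}, then serialize."""
    encoded = {
        k: {"__class__": class_to_path(v)} if isinstance(v, type) else v
        for k, v in config.items()
    }
    return json.dumps(encoded)


def from_json_config(text: str) -> dict:
    """Inverse of to_json_config: re-import any encoded classes."""
    decoded = json.loads(text)
    return {
        k: path_to_class(v["__class__"])
        if isinstance(v, dict) and "__class__" in v else v
        for k, v in decoded.items()
    }


if __name__ == "__main__":
    # fractions.Fraction stands in for a class such as torch.nn.ELU,
    # so this example runs without PyTorch installed.
    from fractions import Fraction
    cfg = {"n_chans": 22, "drop_prob": 0.4, "activation": Fraction}
    text = to_json_config(cfg)
    print(text)
    assert from_json_config(text) == cfg
```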

See the "Loading and Adapting Pretrained Foundation Models" tutorial for a complete walkthrough.