Braindecode: Decode raw EEG, ECoG and MEG with deep learning

Decode raw brain signals with deep learning.

A PyTorch-native toolbox for end-to-end neural decoding. Load a dataset, pick a published model, train. Same scikit-learn API you already know.

For neuroscientists who want to work with deep learning, and deep learning researchers who want to work with neurophysiological data.

Everything you need to go from signal to prediction.

Built to plug into the EEG ecosystem you already use: every MOABB dataset, every MNE-Python preprocessing function, every scikit-learn training loop, plus 700+ BIDS datasets via EEGDash. One library, no lock-in.

Decode raw electrophysiology

End-to-end models go straight from raw EEG/ECoG/MEG to predictions. No hand-crafted features required.

Models

PyTorch-native, sklearn-friendly

Built on PyTorch and skorch with a Lightning-friendly API. Drop into any training loop you already know.

API reference

Every MOABB dataset, drop-in

All 150+ MOABB datasets work out of the box with MOABBDataset(...). Also supported: TUH, HBN, Sleep Physionet, BCI Competition IV, BIDS, and 700+ datasets via the sibling project EEGDash.

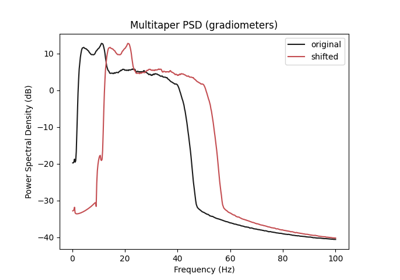

Preprocessing & augmentations

Fully compatible with every MNE preprocessing function and with EEGPrep, plus exponential standardization and 20+ EEG augmentations.
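To make the idea of exponential standardization concrete, here is a minimal single-channel sketch in plain Python. It assumes the usual recursive form, with an exponentially decaying mean and variance. The function name, the default factor_new and eps values, and the initialization are choices made for this illustration; this is not Braindecode's own implementation.

```python
import math


def exponential_standardize(signal, factor_new=0.001, eps=1e-4):
    """Standardize one channel with an exponentially decaying mean and variance.

    Illustrative sketch only; names and defaults are assumptions.
    """
    if not signal:
        return []
    mean = signal[0]  # start the running mean at the first sample
    var = 0.0         # start the running variance at zero
    out = []
    for x in signal:
        # Blend the new sample into the running statistics.
        mean = factor_new * x + (1.0 - factor_new) * mean
        var = factor_new * (x - mean) ** 2 + (1.0 - factor_new) * var
        # eps keeps the division stable while the variance is still tiny.
        out.append((x - mean) / max(math.sqrt(var), eps))
    return out


flat = exponential_standardize([1.0, 1.0, 1.0])
step = exponential_standardize([0.0, 0.0, 10.0], factor_new=0.5)
```

A constant signal stays at (numerically) zero, while a sudden jump shows up as a positive deviation that fades as the running statistics catch up.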

Augmentation

Curated model zoo

EEGNeX, ConvNets, ATCNet, EEGConformer, foundation models. 60+ architectures reproduced from the original papers.

Browse the zoo

Plays well with MNE-Python

Native interop with mne.io.Raw and mne.Epochs. Reuse the analysis tools you already know for QC and visualization.

MNE bridge

Train a model in 15 lines.

From raw motor-imagery EEG to a trained classifier. Each step is a stand-alone module; swap any piece without touching the others.

- 01 Load the dataset: Pull BCI Competition IV-2a via MOABB.

- 02 Preprocess & window: Bandpass-filter, then cut motor-imagery trials.

- 03 Pick a model: EEGNeX with 22 channels, 4 classes, 4.5 s @ 250 Hz.

- 04 Train: skorch wrapper with sklearn-style fit().
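The four steps above can be sketched roughly as follows. This is a hedged outline, not the page's official snippet. It assumes braindecode, moabb and torch are installed. The dataset name "BNCI2014_001" may differ between MOABB versions. The 4 to 38 Hz band, the 0.5 s pre-trial offset and all training hyperparameters are illustrative choices. The function name train_motor_imagery is hypothetical.

```python
# Values quoted in the steps above.
N_CHANS = 22
N_CLASSES = 4
SFREQ = 250                              # Hz
WINDOW_SECONDS = 4.5
N_TIMES = int(WINDOW_SECONDS * SFREQ)    # samples per window


def train_motor_imagery(subject_id=1, max_epochs=4):
    """Sketch of load -> preprocess -> model -> fit (downloads data on first run)."""
    # Heavy imports live inside the function so the constants above
    # can be inspected without the libraries installed.
    import torch
    from braindecode import EEGClassifier
    from braindecode.datasets import MOABBDataset
    from braindecode.models import EEGNeX
    from braindecode.preprocessing import (
        Preprocessor,
        create_windows_from_events,
        preprocess,
    )

    # 01 Load the dataset (BCI Competition IV-2a via MOABB; name is an assumption).
    dataset = MOABBDataset(dataset_name="BNCI2014_001", subject_ids=[subject_id])

    # 02 Preprocess & window: keep EEG channels, bandpass, cut trials.
    preprocess(dataset, [
        Preprocessor("pick_types", eeg=True, meg=False, stim=False),
        Preprocessor("filter", l_freq=4.0, h_freq=38.0),  # assumed band
    ])
    windows = create_windows_from_events(
        dataset,
        trial_start_offset_samples=-int(0.5 * SFREQ),  # assumed: start 0.5 s early
        trial_stop_offset_samples=0,
        preload=True,
    )

    # 03 Pick a model.
    model = EEGNeX(n_chans=N_CHANS, n_outputs=N_CLASSES, n_times=N_TIMES)

    # 04 Train with the skorch wrapper and sklearn-style fit().
    clf = EEGClassifier(
        model,
        criterion=torch.nn.CrossEntropyLoss,
        optimizer=torch.optim.AdamW,
        train_split=None,   # no validation split in this sketch
        lr=1e-3,
        batch_size=64,
        max_epochs=max_epochs,
    )
    clf.fit(windows, y=None)
    return clf
```

Because each step only hands a dataset or a module to the next, swapping EEGNeX for another architecture should only touch the step 03 line, provided the replacement accepts the same n_chans, n_outputs and n_times arguments.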

A library of published architectures.

Every model is reproduced from its original paper and ships with a consistent constructor. The shared EEGModuleMixin takes any of n_chans, n_outputs, n_times, chs_info, input_window_seconds or sfreq; the missing ones are derived for you.
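As an illustration of how the missing parameters can be derived, the sketch below fills in related values from whichever ones are supplied. The helper resolve_signal_params is hypothetical and is not Braindecode's implementation; it only encodes the relationships n_chans = len(chs_info) and n_times = input_window_seconds * sfreq. n_outputs depends on the task, so it is left out.

```python
def resolve_signal_params(n_chans=None, n_times=None, chs_info=None,
                          input_window_seconds=None, sfreq=None):
    """Fill in whichever related signal parameters can be derived (sketch)."""
    # The channel count follows from the channel descriptions, if given.
    if n_chans is None and chs_info is not None:
        n_chans = len(chs_info)

    # n_times, input_window_seconds and sfreq are tied together:
    # any two of them determine the third.
    if n_times is None and input_window_seconds is not None and sfreq is not None:
        n_times = int(input_window_seconds * sfreq)
    elif input_window_seconds is None and n_times is not None and sfreq is not None:
        input_window_seconds = n_times / sfreq
    elif sfreq is None and n_times is not None and input_window_seconds is not None:
        sfreq = n_times / input_window_seconds

    return {
        "n_chans": n_chans,
        "n_times": n_times,
        "input_window_seconds": input_window_seconds,
        "sfreq": sfreq,
    }


# The motor-imagery setup from this page: 22 channels, 4.5 s at 250 Hz.
params = resolve_signal_params(
    chs_info=[{} for _ in range(22)],  # placeholder channel entries
    input_window_seconds=4.5,
    sfreq=250,
)
```

Values that cannot be derived simply stay None, which lets a caller decide whether a given model actually needs them.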

Hugging Face Hub

Pretrained, on the Hub.

Every Braindecode model implements from_pretrained and push_to_hub, the same unified API as transformers. Models serialize as a config-driven JSON + safetensors pair (diffable, audit-friendly), and datasets ride on Zarr so push / pull stream chunks lazily without re-uploading the full archive.

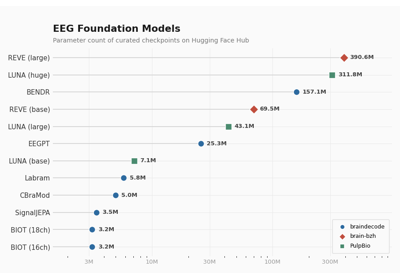

Curated foundation weights

BENDR, EEGPT, Signal-JEPA, LaBraM, BIOT and more. Pretrained checkpoints stored as safetensors alongside a readable JSON config, so fine-tuning is reproducible and weight provenance stays auditable. Curated and benchmarked under OpenEEG-Bench.

from braindecode.models import EEGPT
model = EEGPT.from_pretrained("braindecode/eegpt")

Visit huggingface.co/braindecode →

Share & pull EEG datasets

Push WindowsDataset, EEGWindowsDataset or RawDataset objects to the Hub with one call. Storage is Zarr-backed, so subsequent pulls only fetch the chunks you read. Useful for multi-GB sessions over flaky links. EEGDash mirrors 700+ BIDS-ready EEG/MEG datasets the same way.

ds.push_to_hub("alice/bnci2014_001")

Browse EEGDash datasets →

What you can decode

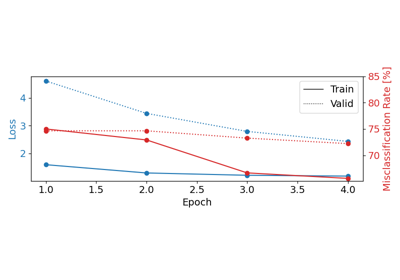

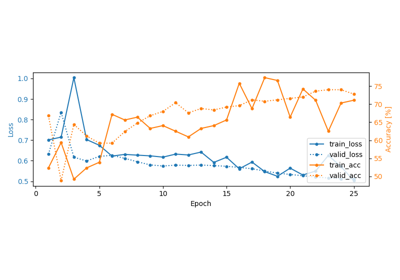

Motor imagery

Trialwise decoding on BCI IV-2a

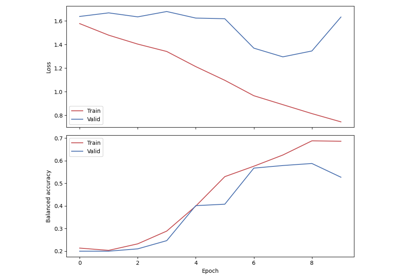

Sleep staging

Chambon 2018 on Sleep Physionet

ECoG

Finger-flexion regression on BCI IV-4

Foundation FT

Fine-tune Signal-JEPA

Hugging Face

Load pretrained foundation models

Hugging Face

Push & pull datasets via the Hub

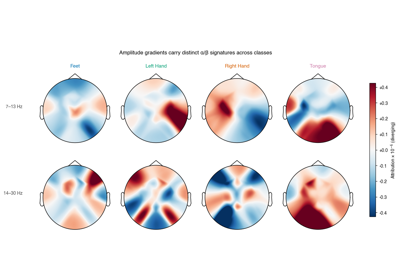

XAI

Interpretability sanity checks

Self-supervised

Relative positioning pretraining

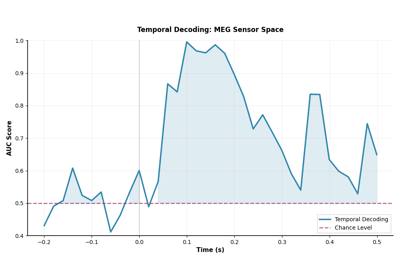

MEG

Temporal generalization on MEG

Augmentation

EEG augmentation search

A unified API, nine modules.

From raw recording to trained checkpoint, the same nine modules cover every task: classification, regression, sleep staging, self-supervised pretraining.

Built and shared with neighbors.

Open neural decoding doesn't live alone. Braindecode plugs into a wider open-source neuroscience stack, ships sibling projects that round out the data story, and serves as a foundation for other research toolkits.

mne.io.Raw or mne.Epochs works as input.

[…] braindecode under its models extras and pulls in Braindecode's LaBraM and EEGPT backbones.

Building on Braindecode? See the full list of dependent projects on GitHub →